Natural Code Just Works.

01/13/2012 § 2 Comments

As soon as I heard about ARC I thought “WOW, this is amazing! A compile-time garbage collector! Why didn’t anyone think about this before!?”

But even after migrating to iOS 5 I was a little scared of changing the compiler and the whole memory management scheme I have been using my entire life. I was actually waiting for a new project so that I could start using these new concepts. It turns out I couldn’t wait anymore, so I decided to migrate the huge project I am working on to ARC. Not only for the promise of “running faster”, but also for the education (in the end I love to explore and learn new ways of writing code).

After migrating the source code with the automatic migration tool provided by Xcode 4.2.1 (and setting the Simulator as the deployment target hahahah) I could immediately see that natural code just works. That is the way Apple wants us to think about using ARC, and my impression is that this is totally possible.

But I am a man full of questions about the meaning of life and all this crap, so I couldn’t just believe that and started watching some of the ARC talks Apple has given to understand how this magic works behind the scenes. Truth is I can’t live with something I don’t understand when it comes to coding.

Although ARC is pretty simple, here are some notes I have made that really helped me understand “da magic”.

First of all there are 5 things you cannot forget:

1) Strong references. Every variable is a strong reference by default and is implicitly released when its scope ends. A strong reference is the same thing as a retained reference that you don’t manage. For example:

When you declare NSString *name; the compiler understands you actually meant __strong NSString *name;. And this means you don’t need to retain the reference nor release it afterwards anymore.

- (id)init {
    self = [super init];
    if (self) {
        name = [@"Name" retain];
    }
    return self;
}

- (void)dealloc {
    [name release];
    [super dealloc];
}

becomes

- (id)init {
    self = [super init];
    if (self) {
        name = @"Name";
    }
    return self;
}

2) Autoreleasing References. Every out-parameter is already retained and autoreleased for you.

- (void)method:(NSObject **)param { *param = …; }

means

- (void)method:(__autoreleasing NSObject **)param {
    *param = [[… retain] autorelease];
}

3) Unsafe references. If you see this, keep in mind you are working with a variable that is not initialized for you, gets no extra compiler logic and has no restrictions. An unsafe reference tells ARC not to touch it, so what you get is the same as an assign property. The advantage here is that you can use it inside structs. But be warned: this can easily lead to dangling references.

__unsafe_unretained NSString *unsafeName = name;

4) Weak References. A weak reference works like an assign property, but becomes nil as soon as the object starts deallocation.

__weak NSString *weakName = name;

If you want to make a reference weak, just add __weak before the variable declaration, or use weak on the property instead of the old assign attribute.

5) Return Values. They never transfer ownership (ARC does a retain and returns an autoreleased object for you) unless the selector starts with alloc, copy, init, mutableCopy or new. In these cases ARC returns a +1 reference, which you also don’t need to bother with on the caller side thanks to the rules we discussed above.

Now that you know how ARC works and what it does, you can write natural code in peace =)

Multiple Video Playback on iOS

01/11/2012 § 44 Comments

As usual let me start by telling you a quick story on how this post came to be…

Once upon a time..NOT. So, I was working on this project for Hyundai at nKey when we got to a screen that required two videos playing at the same time, so the user could see how a car would behave with and without a feature (like Electronic Stability Control). As an experienced developer I immediately told the customer we should merge both videos so that we could play “both at the same time” on iOS. I explained to him that in order to play videos on iOS, Apple released the MediaPlayer.framework a long time ago, which according to the docs (and real life :P) isn’t able to handle more than one video playback at a time (although you can have two MPMoviePlayerController instances).

He said OK and that was what we did. However the requirements changed and we had to add a background video that plays on a loop..aaand I started to have problems coordinating all these video playbacks so that only one was playing at a time and the user wouldn’t notice it.

Fortunately nKey sent me to the iOS Tech Talk that was happening in São Paulo/BR this very Monday, and there I got into a talk where the Media Technologies Evangelist, Eryk Vershen, was discussing the AVFoundation.framework and how it is used by the MediaPlayer.framework (aka MPMoviePlayerController) for video playback. After the talk, during Wine and Cheese, I got to speak to Eryk about my issue and explained to him how I was thinking about handling the problem. His answer was something like “Sure! Go for it! iOS surely is capable of playing multiple videos at the same time!…Oh…and I think something around 4 is the limit”. That answer made me happy and curious, so I asked him why the MediaPlayer.framework is incapable of handling multiple video playback if that isn’t a library limitation…he told me the MPMoviePlayerController was created to present cut-scenes in games early on…that this is why on previous iOS versions only fullscreen playback was allowed, and that this limitation is a matter of legacy.

When I got back to my notebook I worked on this very basic version of a video player using the AVFoundation.framework (which obviously I made more robust when I got back to the office so that we could use it on the project).

Ok, story told. Let’s get back to work!

The AVFoundation framework provides you the AVPlayer object to implement controllers and user interfaces for single- or multiple-item playback. The visual results generated by the AVPlayer object can be displayed in a CoreAnimation layer of class AVPlayerLayer. In AVFoundation, timed audiovisual media such as videos and sounds are represented by an AVAsset object. According to the docs, each asset contains a collection of tracks that are intended to be presented or processed together, each of a uniform media type, including but not limited to audio, video, text, closed captions, and subtitles. Due to the nature of timed audiovisual media, upon successful initialization of an asset some or all of the values for its keys may not be immediately available. In order to avoid blocking the main thread, you can register your interest in particular keys and be notified when their values are available.

With this in mind, subclass UIViewController and name this class VideoPlayerViewController. Just like the MPMoviePlayerController, let’s add an NSURL property that tells us where we should grab our video from. As described above, add the following code to load the AVAsset once the URL is set.

#pragma mark - Public methods

- (void)setURL:(NSURL*)URL {

// Copy first, then release: URL may be the very object stored in _URL.
NSURL *newURL = [URL copy];

[_URL release];

_URL = newURL;

AVURLAsset *asset = [AVURLAsset URLAssetWithURL:_URL options:nil];

NSArray *requestedKeys = [NSArray arrayWithObjects:kTracksKey,

kPlayableKey, nil];

[asset loadValuesAsynchronouslyForKeys:requestedKeys

completionHandler: ^{ dispatch_async(

dispatch_get_main_queue(), ^{

[self prepareToPlayAsset:asset

withKeys:requestedKeys];

});

}];

}

- (NSURL*)URL {

return _URL;

}

So, once the URL for the video is set we create an asset to inspect the resource referenced by the given URL and asynchronously load up the values for the asset keys “tracks” and “playable”. At loading completion we can operate on the AVPlayer on the main queue (the main queue is used to naturally ensure safe access to a player’s nonatomic properties while dynamic changes in playback state may be reported).

#pragma mark - Private methods

- (void)prepareToPlayAsset:(AVURLAsset *)asset withKeys:(NSArray *)requestedKeys {
    for (NSString *thisKey in requestedKeys) {
        NSError *error = nil;
        AVKeyValueStatus keyStatus = [asset statusOfValueForKey:thisKey error:&error];
        if (keyStatus == AVKeyValueStatusFailed) {
            return;
        }
    }

    if (!asset.playable) {
        return;
    }

    if (self.playerItem) {
        [self.playerItem removeObserver:self forKeyPath:kStatusKey];
        [[NSNotificationCenter defaultCenter] removeObserver:self
                                                        name:AVPlayerItemDidPlayToEndTimeNotification
                                                      object:self.playerItem];
    }

    self.playerItem = [AVPlayerItem playerItemWithAsset:asset];
    [self.playerItem addObserver:self
                      forKeyPath:kStatusKey
                         options:NSKeyValueObservingOptionInitial | NSKeyValueObservingOptionNew
                         context:AVPlayerDemoPlaybackViewControllerStatusObservationContext];

    if (![self player]) {
        [self setPlayer:[AVPlayer playerWithPlayerItem:self.playerItem]];
        [self.player addObserver:self
                      forKeyPath:kCurrentItemKey
                         options:NSKeyValueObservingOptionInitial | NSKeyValueObservingOptionNew
                         context:AVPlayerDemoPlaybackViewControllerCurrentItemObservationContext];
    }

    if (self.player.currentItem != self.playerItem) {
        [[self player] replaceCurrentItemWithPlayerItem:self.playerItem];
    }
}

Once the values for all the keys we require have been loaded on the asset, we check whether loading was successful and whether the asset is playable. If so, we set up an AVPlayerItem (a representation of the presentation state of an asset that’s played by an AVPlayer object) and an AVPlayer to play the asset. Note that I didn’t add any error handling at this point. Here we should probably create a delegate and let the view controller, or whoever is using your player, decide what is the best way to handle the possible errors.

We also added some key-value observers so that we are notified when our view should be tied to the player and when the AVPlayerItem is ready to play.

#pragma mark - Key Value Observing

- (void)observeValueForKeyPath:(NSString *)path
                      ofObject:(id)object
                        change:(NSDictionary *)change
                       context:(void *)context {
    if (context == AVPlayerDemoPlaybackViewControllerStatusObservationContext) {
        AVPlayerStatus status = [[change objectForKey:NSKeyValueChangeNewKey] integerValue];
        if (status == AVPlayerStatusReadyToPlay) {
            [self.player play];
        }
    } else if (context == AVPlayerDemoPlaybackViewControllerCurrentItemObservationContext) {
        AVPlayerItem *newPlayerItem = [change objectForKey:NSKeyValueChangeNewKey];
        if (newPlayerItem) {
            [self.playerView setPlayer:self.player];
            [self.playerView setVideoFillMode:AVLayerVideoGravityResizeAspect];
        }
    } else {
        [super observeValueForKeyPath:path ofObject:object change:change context:context];
    }
}

Once the AVPlayerItem is in place we are free to attach the AVPlayer to the player layer that displays visual output. We also make sure to preserve the video’s aspect ratio and fit the video within the layer’s bounds.

As soon as the AVPlayer is ready, we command it to play! iOS does the hard work 🙂

As I mentioned earlier, to play the visual component of an asset, you need a view containing an AVPlayerLayer layer to which the output of an AVPlayer object can be directed. This is how you subclass a UIView to meet the requirements:

@implementation VideoPlayerView

+ (Class)layerClass {
    return [AVPlayerLayer class];
}

- (AVPlayer *)player {
    return [(AVPlayerLayer *)[self layer] player];
}

- (void)setPlayer:(AVPlayer *)player {
    [(AVPlayerLayer *)[self layer] setPlayer:player];
}

- (void)setVideoFillMode:(NSString *)fillMode {
    AVPlayerLayer *playerLayer = (AVPlayerLayer *)[self layer];
    playerLayer.videoGravity = fillMode;
}

@end

And this is it!

Of course I didn’t add all the necessary code for building and running the project but I wouldn’t let you down! Go to GitHub and download the full source code!

3D Tag Cloud available on GitHub!

11/26/2011 § Leave a comment

Hey guys!

This time I bring very good news! The 3D Tag Cloud I created almost a year ago is finally free software, available on GitHub.

Yes, that is right. A lot of people have been asking for a code sample after reading that tutorial, so I decided to just make it available on GitHub as free software under the terms of the GNU General Public License version 3, so that you guys can use, redistribute or modify it at will.

Now it is your turn! Contribute!

Anti-aliasing rotated UIViews

10/01/2011 § 4 Comments

It has been a long time since I added a new post here, so here I am with a pretty quick one.

This story begins half a year ago, when I worked on the Chatter app for iPad with my dear friend Didier Prophete. I worked on an anti-aliasing solution for a rotated image that I was pretty eager to publish, but I couldn’t do it at the time.

Yesterday I was working on a Pinch Spread component (yes, just like Chatter does, but without the pan part and for a different reason) at nKey to use in some of our apps. I needed that old solution but couldn’t remember it, so I had to come up with a simpler one since I was in a hurry due to other matters.

Going (finally) straight to the point: when you rotate a UIView its borders become serrated, so you need to anti-alias them in order to look good.

So my solution was to use a container view 5 pixels wider in order to reduce the serrated effect on the view I wanted to rotate – of course, you have to center the view on the container and then rotate the container view instead.

The next step is to add a 3 pixel transparent border to the inner view and rasterize it so that the pixels interpolate, smoothing the border.

view.layer.borderWidth = 3;
view.layer.borderColor = [UIColor clearColor].CGColor;
view.layer.shouldRasterize = YES;

Now the final trick is to add some shadow. This will make the border look somewhat more solid. In my case I added a lot of shadow because I wanted the shadow to be very visible (the view stack for the Pinch Spread looks sharper and prettier due to the shadow effect).

view.layer.shadowOffset = CGSizeMake(0, -1);
view.layer.shadowOpacity = 1;
view.layer.shadowColor = [UIColor blackColor].CGColor;

So, by using the solution above this is what you will get:

Although it is a pretty obvious and simple solution, I hope it helps you out (and now I have a reminder for the next time!).

Creating a 3D Tag Cloud

11/17/2010 § 33 Comments

I was bored last weekend, so I decided to do something slightly different. The first idea that came to my mind was a 3D sphere on which I could place any kind of view. Truth is that there is nothing better than a clear idea of what you want to do in order to achieve your goals. So, why not a Tag Cloud? It is simple, and at a very basic understanding, it is nothing more than a bunch of views distributed on a sphere.

Since I wanted to create it without using OpenGL ES, I took a moment to think about what would be the best way to implement this stuff using just the UIKit.

After not that much thinking, I decided to evenly distribute points on a sphere and use these points as the center of each view. Obviously UIKit just works with 2 dimensions, so how to achieve the 3D aspect? Simple, let’s use the Z coordinate as the scale factor. But still, there would be smaller views (views that should be far from the screen) overlaying bigger views (the ones closer to the screen). This can be easily solved via z-index ordering (that is available in the standard SDK).

There will be no fun if we are not able to at least rotate our 3D Tag Cloud around every axis right? ^^

…

Once our goal is clear, the only remaining question is how to implement all this stuff.

Let’s revisit our requirements:

- Evenly distribute points in order to place our views on a sphere;

- Use the z coordinate to properly scale and order each view;

- Rotate each view around each axis so that we simulate a full sphere rotation;

There are a lot of algorithms out there to evenly distribute points on a sphere, but there would be no fun if we don’t really define and comprehend what is going on here. To begin with, what exactly are evenly distributed points?

Being very precise, we could say that to evenly distribute points on a sphere the resulting polygonal object defined by the points needs to have faces that are equal as well as an equal number of faces leading into every vertex, and this is what defines perfect shapes (or Platonic solids). The problem is that there is no perfect shape with more than 20 vertexes, and since each vertex is the center of a view, this would mean that we could only have 20 views (UILabels) on our cloud.

Therefore we need to think about “evenly” in another way. Let’s say the points are evenly distributed when the two closest points in the whole set are as far apart from each other as possible.

Again, there is a bunch of algorithms for this (Golden Section Spiral, Saff and Kuijlaars, Dave Rusin’s Disco Ball and other variations). I tried a lot of them, and the one that fit my needs best was the Golden Section Spiral, not just because I had better distribution results but also because I could easily define the number of vertexes I wanted.

What the Golden Section Spiral algorithm does is choose successive longitudes according to the “most irrational number” so that no two nodes in nearby bands come too close to each other in longitude.

The implementation I came up with runs this algorithm creating 3D points (which I called PFPoint; it is exactly the same as a CGPoint but with an additional coordinate). These points are then added to an array so that we can use them later to properly place our views.

@implementation PFGoldenSectionSpiral

+ (NSArray *)sphere:(NSInteger)n {

NSMutableArray* result = [NSMutableArray arrayWithCapacity:n];

CGFloat N = n;

CGFloat h = M_PI * (3 - sqrt(5));

CGFloat s = 2 / N;

for (NSInteger k=0; k<N; k++) {

CGFloat y = k * s - 1 + (s / 2);

CGFloat r = sqrt(1 - y*y);

CGFloat phi = k * h;

PFPoint point = PFPointMake(cos(phi)*r, y, sin(phi)*r);

NSValue *v = [NSValue value:&point

withObjCType:@encode(PFPoint)];

[result addObject:v];

}

return result;

}

@end

This algorithm returns a list of points within [-1, 1] meaning that we will need to properly convert each coordinate to iOS coordinates. In our case, the z-coordinate needs to be converted to [0, 1], while x and y coordinates to [0, frame size].

So basically you can create a UIView subclass – that I called PFSphereView – and add a method – let’s say – setItems that receives a set of views to place within the sphere.

- (void)setItems:(NSArray *)items {

NSArray *spherePoints =

[PFGoldenSectionSpiral sphere:items.count];

for (int i=0; i<items.count; i++) {

PFPoint point;

NSValue *pointRep = [spherePoints objectAtIndex:i];

[pointRep getValue:&point];

UIView *view = [items objectAtIndex:i];

view.tag = i;

[self layoutView:view withPoint:point];

[self addSubview:view];

}

}

- (void)layoutView:(UIView *)view withPoint:(PFPoint)point {

CGFloat viewSize = view.frame.size.width;

CGFloat width = self.frame.size.width - viewSize*2;

CGFloat x = [self coordinateForNormalizedValue:point.x

withinRangeOffset:width];

CGFloat y = [self coordinateForNormalizedValue:point.y

withinRangeOffset:width];

view.center = CGPointMake(x + viewSize, y + viewSize);

CGFloat z = [self coordinateForNormalizedValue:point.z

withinRangeOffset:1];

view.transform = CGAffineTransformScale(

CGAffineTransformIdentity, z, z);

view.layer.zPosition = z;

}

- (CGFloat)coordinateForNormalizedValue:(CGFloat)normalizedValue

withinRangeOffset:(CGFloat)rangeOffset {

CGFloat half = rangeOffset / 2.f;

CGFloat coordinate = fabs(normalizedValue) * half;

if (normalizedValue > 0) {

coordinate += half;

} else {

coordinate = half - coordinate;

}

return coordinate;

}

Once the setItems method is called, we can generate a point for each view and layout that view by placing and scaling it according to the converted iOS coordinates. Now you should be able to create a view controller and instantiate the PFSphereView, passing a bunch of UILabels, to see what our 3D Tag Cloud looks like.
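By the way, the branching in coordinateForNormalizedValue:withinRangeOffset: boils down to a plain linear mapping from [-1, 1] to [0, range]. The sketch below, in plain C, puts a port of that method next to a generalised version that also accepts a lower bound. The function names are mine, made up for illustration.

```c
#include <math.h>

/* Plain C port of coordinateForNormalizedValue:withinRangeOffset: */
static double coordinate_for_normalized_value(double v, double range) {
    double half = range / 2.0;
    double coordinate = fabs(v) * half;
    return (v > 0) ? coordinate + half : half - coordinate;
}

/* Same mapping without the branch: sends v in [-1, 1] linearly onto [lo, hi]. */
static double map_normalized(double v, double lo, double hi) {
    return lo + (v + 1.0) * 0.5 * (hi - lo);
}
```

With lo = 0 both functions agree for every v in [-1, 1]. The lower bound comes in handy if you want the scale factor to stay above zero, for example mapping z onto [0.1, 1] so that the farthest labels never shrink to nothing.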

Unfortunately you can’t animate any kind of rotation yet, since we did not address it. And now is when the cool part comes into play.

We actually can’t use any 3D transformation available on the SDK since we don’t want to rotate our labels, but instead the whole sphere. How can we achieve this?

Well, to rotate our sphere all that we need to do is to rotate each point. Once a point is rotated, its coordinates change in Cartesian space and therefore each view will rotate around the desired axis in such a way that only its position and scale will change (not the actual rotation angle).

The achieved behavior is totally different from the result we would get by changing the anchorPoint to be the center of the sphere and use CATransform3DRotate, for example.

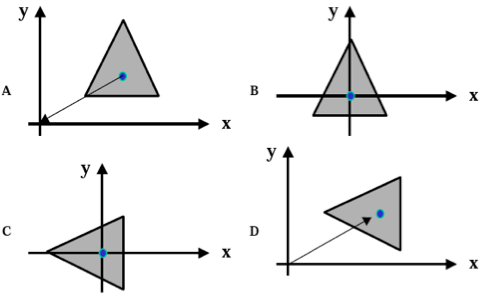

If you didn’t get exactly why, take a moment to draw some points on a 3D Cartesian plane on a piece of paper. Imagine each point rotating around the center of the sphere so that we can actually see a sphere rotating. Then imagine every view – UILabel in our case – rotating around the very same point. Once you find out what the difference is, continue reading this post.

….

Time to rotate our sphere.

The basic idea behind transformations such as rotations on 3D space, is to think of our views as a bunch of points or vectors where we apply some math and project the results back into the 3 dimensional space. A very efficient way to do so, is to use a homogeneous coordinate representation that maps a point on a n-dimensional space into another on the (n+1)-dimensional space, so that we can represent any point or geometric transformations using only matrixes.

To apply a transformation to a point, we need to multiply two matrixes: the point and the transformation matrix.
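To make this concrete, here is a small sketch in plain C of a point written in homogeneous coordinates (x, y, z, 1) and multiplied by a 4x4 translation matrix. I am assuming the row-vector convention (point on the left, offsets in the last row of the matrix), and the Vec4 and Mat4 types are hypothetical stand-ins for illustration, not the PFMatrix helpers.

```c
/* Hypothetical helper types for this sketch. */
typedef struct { double v[4]; } Vec4;    /* homogeneous point (x, y, z, 1) */
typedef struct { double m[4][4]; } Mat4; /* 4x4 transformation matrix */

/* Lifts a 3D point into homogeneous coordinates. */
static Vec4 vec4_from_point(double x, double y, double z) {
    Vec4 p = {{x, y, z, 1.0}};
    return p;
}

/* Translation laid out for row vectors: the offsets live in the last row. */
static Mat4 mat4_translation(double tx, double ty, double tz) {
    Mat4 t = {{
        {1, 0, 0, 0},
        {0, 1, 0, 0},
        {0, 0, 1, 0},
        {tx, ty, tz, 1}
    }};
    return t;
}

/* Row vector (1x4) times matrix (4x4). */
static Vec4 vec4_times_mat4(Vec4 p, Mat4 t) {
    Vec4 out = {{0, 0, 0, 0}};
    for (int col = 0; col < 4; col++) {
        for (int row = 0; row < 4; row++) {
            out.v[col] += p.v[row] * t.m[row][col];
        }
    }
    return out;
}
```

The extra 1 in the fourth slot is what lets a translation be expressed as a matrix multiplication at all: it picks up the last row of the matrix and adds the offsets to x, y and z.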

Computer Graphics taught us that every transformation can be achieved by multiplying a matrix set. This knowledge not only allows us to apply the very basic form of rotation, but also to concatenate transformations in order to achieve a “real world” rotation. For example, if we want to rotate an object around its center we need to translate that object to the origin, rotate it and then translate it back to its original position.

And that is what we are going to do with each of our points.

If you don’t have a basic understanding of geometric transformations you should read this topic about rotation to get familiar with terms and matrixes used here.

Now that we know the sequence of steps we need to take in order to achieve rotation around a given point on a given axis, we just need to get down into math. Let’s do that using code.

So, there are three kinds of primitive geometric transformations that can be combined to achieve any geometric transformation: rotation, translation and scaling.

We saw that we can combine translations and rotations (and that order matters…just look at the figure and try to achieve D without following the order A-B-C-D) into a single matrix, and multiply our point by this matrix to get a new point that is the previous point rotated around an arbitrary point. But how do we define which axis we are rotating about, or how do we tell what is a translation and what is a rotation?

Actually there is a set of primitive matrixes defined for each primitive geometric transformation. Below I provide useful matrixes for our goal: translation and rotation for each axis (x, y and z).

static PFMatrix PFMatrixTransform3DMakeTranslation(PFPoint point) {

CGFloat T[4][4] = {

{1, 0, 0, 0},

{0, 1, 0, 0},

{0, 0, 1, 0},

{point.x, point.y, point.z, 1}

};

PFMatrix matrix = PFMatrixMakeFromArray(4, 4, *T);

return matrix;

}

static PFMatrix PFMatrixTransform3DMakeXRotation(PFRadian angle) {

CGFloat c = cos(PFRadianMake(angle));

CGFloat s = sin(PFRadianMake(angle));

CGFloat T[4][4] = {

{1, 0, 0, 0},

{0, c, s, 0},

{0, -s, c, 0},

{0, 0, 0, 1}

};

PFMatrix matrix = PFMatrixMakeFromArray(4, 4, *T);

return matrix;

}

static PFMatrix PFMatrixTransform3DMakeYRotation(PFRadian angle) {

CGFloat c = cos(PFRadianMake(angle));

CGFloat s = sin(PFRadianMake(angle));

CGFloat T[4][4] = {

{c, 0, -s, 0},

{0, 1, 0, 0},

{s, 0, c, 0},

{0, 0, 0, 1}

};

PFMatrix matrix = PFMatrixMakeFromArray(4, 4, *T);

return matrix;

}

static PFMatrix PFMatrixTransform3DMakeZRotation(PFRadian angle) {

CGFloat c = cos(PFRadianMake(angle));

CGFloat s = sin(PFRadianMake(angle));

CGFloat T[4][4] = {

{c, s, 0, 0},

{-s, c, 0, 0},

{0, 0, 1, 0},

{0, 0, 0, 1}

};

PFMatrix matrix = PFMatrixMakeFromArray(4, 4, *T);

return matrix;

}

It would be nice of you to properly research the math behind these matrixes (this post would be too long if I included a proper explanation of “where the hell does this matrix set come from??” and you probably would not read it until the very end 😉 [BTW, I am surprised you got here]).

As you may have noticed I created a bunch of representations and helpers to make the code really simple and straightforward. But I don’t think that you need me to provide that code (matrix representation for example), so I will keep speaking of what really matters. Anyways, you can always reach me via e-mail if you want some sort of code.

Ok….we already have a specific matrix to rotate a point around any axis and also one to translate it. How are we supposed to use these matrixes to rotate a point around another arbitrary point?

Since “the best way to teach is by example”….

Let’s say that you want to rotate (1,1,1) around (0,1,1) by 45 degrees on the x axis. In this case the code looks like:

static PFMatrix PFMatrixTransform3DMakeXRotationOnPoint(PFPoint point,

PFRadian angle) {

PFMatrix T = PFMatrixTransform3DMakeTranslation(

PFPointMake(-point.x, -point.y, -point.z));

PFMatrix R = PFMatrixTransform3DMakeXRotation(angle);

PFMatrix T1 = PFMatrixTransform3DMakeTranslation(point);

return PFMatrixMultiply(PFMatrixMultiply(T, R), T1);

}

PFMatrix coordinate = PFMatrixMakeFromPFPoint(PFPointMake(1,1,1));

PFMatrix transform = PFMatrixTransform3DMakeXRotationOnPoint(

PFPointMake(0,1,1), 45);

PFMatrix transformedCoordinate = PFMatrixMultiply(coordinate,

transform);

PFPoint result = PFPointMakeFromMatrix(transformedCoordinate);

Ok….What the heck is going on!?

First of all we have to create a matrix from our point (UILabel.center) in order to be able to multiply the point by our geometric transformation. This transformation, in turn, consists of 3 primitive transformations.

The first one translates the point to the origin, and that is why we are building the Translate matrix using (-x,-y,-z). Then the second one builds a rotation of 45 degrees around the x axis.

As I explained before, these two transformations are concatenated through multiplication.

And since we want to rotate it around the “point” and not around “origin”, we translate it back to “point” by multiplying the resulting matrix by a second Translate matrix using (x, y, z).

That composite transformation is then multiplied by our point (UILabel.center) matrix representation. This gives us as a result a third matrix, from which we extract a point representation.

At this moment you should be thinking that “result” point will be our next center and scale representation for one of our labels. If you thought this you are right!

The basic idea now is to listen for gestures (using UIPanGestureRecognizer or UIRotationGestureRecognizer) to select which rotation matrix will be used and what angle you will pass to PFMatrixTransform3DMake(Axis)RotationOnPoint. Once you have selected the matrix and found the proper angle (based on the locationInView: method of your gesture recognizer, or even using a constant), you just need to iterate through every UILabel and call layoutView:withPoint: just like we did in the setItems method.

So as not to take all the fun away from you, I will let you handle the gestures 😉

By the way, below is what your sphere should look like using this code (of course I added some code to actually handle gestures!).

Try to use [0.1, 1] instead of [0,1] for the z coordinate interval and play with view sizes to make it look the way you like.

Hope you enjoy and share your thoughts!

Three20: A Brief TTLauncherView tutorial

10/19/2010 § 35 Comments

The TTLauncherView is a UI component that is very simple to use and basically mimics the iOS home screen. It comes with a scroll view and a page control that enable you to browse through a set of TTLauncherItems. Each of these items can be reordered, deleted or even have a badge number, just like application badges on the iOS home screen.

I have already worked on a lot of applications where a “home screen behavior” would have been great. The Facebook guys found a very neat way to provide this behavior for custom application developers. They just made it a UI component with some delegate methods, just like every other standard component, and of course it works pretty well with their navigation model.

It is so simple, that you just have to create your view controller, append a TTLauncherView to it and attach your TTLauncherItems. If you want to provide a more complete behavior, just implement the TTLauncherViewDelegate and there you go.

A Few Programming Practices: Writing good code

09/13/2010 § 1 Comment

Obviously much of what I will talk about here is a kind of convention among Cocoa developers. Some of it may not be, but these are certainly details that I believe make the code more readable.

First of all we write Objective-C code, meaning that our parameters are named. This just happens to be one of our most valuable tools for writing readable code. Don’t be shy to come up with long names (Apple does that too), that is precisely the reason we have named parameters. Be clear.

The second important tip is to keep your code simple. You must have heard this phrase a lot, but maybe no one told you what they meant by it 😛

Provisioning unveiled

09/08/2010 § 1 Comment

I remember the first time I had to provision a device to run my application (about a year ago). It was complicated to get the idea behind the whole provisioning process.

This is precisely why I decided to write about it. Maybe you are lucky enough to read this before your journey.

View Management Cycle reviewed

09/07/2010 § Leave a comment

Almost all developers, once they get a little more experienced, stop carefully reading the documentation and stop thinking about the things they are already used to doing every day.

But I have stopped to think and discuss with my team how we are supposed to handle some issues, and among these issues there is a very simple one: How are we really supposed to use methods like init, loadView, viewDidLoad, didReceiveMemoryWarning, viewDidUnload and dealloc in our view controllers?

After discussing a lot and obviously checking the documentation, these are my thoughts about this topic: