Creating a 3D Tag Cloud

11/17/2010 § 33 Comments

I was bored last weekend, so I decided to do something slightly different. The first idea that came to my mind was a 3D sphere on which I could place any kind of view. Truth is that there is nothing better than a clear idea of what you want to do in order to achieve your goals. So, why not a Tag Cloud? It is simple, and at a very basic understanding, it is nothing more than a bunch of views distributed on a sphere.

Since I wanted to create it without using OpenGL ES, I took a moment to think about what would be the best way to implement this stuff using just the UIKit.

After not that much of thinking, I decided to evenly distribute points on a sphere and use these points as the center of each view. Obviously UIKit just works with 2 dimentions, so how to achieve the 3D aspect? Simple, let’s use the Z coordinate as the scale factor. But still, there would be smaller views (views that should be far from the screen) overlaying bigger views (the ones closer to the screen). This can be easily solved via z-index ordering (that is available in the standard SDK).

There will be no fun if we are not able to at least rotate our 3D Tag Cloud around every axis right? ^^

…

Once our goal is clear, the only remaining question is how to implement all this stuff.

Let’s revisit our requisites:

- Evenly distribute points in order to place our views on a sphere;

- Use the z coordinate to properly scale and order each view;

- Rotate each view around each axis so that we simulate a full sphere rotation;

There are a lot of algorithms out there to evenly distribute points on a sphere, but there would be no fun if we don’t really define and comprehend what is going on here. To begin with, what exactly are evenly distributed points?

Being very precise, we could say that to evenly distribute points on a sphere the resulting polygonal object defined by the points needs to have faces that are equal as well as an equal number of faces leading into every vertex, and this is what defines perfect shapes (or Platonic solids). The problem is that there is no perfect shape with more than 20 vertexes and since each vertex is a the center of a view, this would mean that we could only have 20 views (UILabels) on our cloud.

Therefore we need to think about “evenly” on another aspect. Let’s say that if any two closest points in the whole set are as far apart as possible from each other, all points are equally distant from each other.

Again, there is a bunch of algorithms for this (Golden Section Spiral, Staff and Kuijlaars, Davi’s Disco Ball and other variations). I tried a lot of them, and the one that fit better to my needs was the Golden Section Spiral, not just because I had better distribution results but also because I could easily define the number of vertexes I wanted.

What the Golden Section Spiral algorithm does is to choose successive longitudes according to the “most irrational number” so that no two nodes in nearby bands come too near from each other in longitude.

The implementation I came up with actually run this algorithm creating 3D points (that I called PFPoint and is exactly the same as a CGPoint but with an additional coordinate). These points are then added to an actual array so that we can use them later to properly place our views.

@implementation PFGoldenSectionSpiral

+ (NSArray *)sphere:(NSInteger)n {

NSMutableArray* result = [NSMutableArray arrayWithCapacity:n];

CGFloat N = n;

CGFloat h = M_PI * (3 - sqrt(5));

CGFloat s = 2 / N;

for (NSInteger k=0; k<N; k++) {

CGFloat y = k * s - 1 + (s / 2);

CGFloat r = sqrt(1 - y*y);

CGFloat phi = k * h;

PFPoint point = PFPointMake(cos(phi)*r, y, sin(phi)*r);

NSValue *v = [NSValue value:&point

withObjCType:@encode(PFPoint)];

[result addObject:v];

}

return result;

}

@end

This algorithm returns a list of points within [-1, 1] meaning that we will need to properly convert each coordinate to iOS coordinates. In our case, the z-coordinate needs to be converted to [0, 1], while x and y coordinates to [0, frame size].

So basically you can create an UIView subclass – that I called PFSphereView – and add a method – let’s say – setItems that receives a set of views to place within the sphere.

- (void)setItems:(NSArray *)items {

NSArray *spherePoints =

[PFGoldenSectionSpiral sphere:items.count];

for (int i=0; i<items.count; i++) {

PFPoint point;

NSValue *pointRep = [spherePoints objectAtIndex:i];

[pointRep getValue:&point];

UIView *view = [items objectAtIndex:i];

view.tag = i;

[self layoutView:view withPoint:point];

[self addSubview:view];

}

}

- (void)layoutView:(UIView *)view withPoint:(PFPoint)point {

CGFloat viewSize = view.frame.size.width;

CGFloat width = self.frame.size.width - viewSize*2;

CGFloat x = [self coordinateForNormalizedValue:point.x

withinRangeOffset:width];

CGFloat y = [self coordinateForNormalizedValue:point.y

withinRangeOffset:width];

view.center = CGPointMake(x + viewSize, y + viewSize);

CGFloat z = [self coordinateForNormalizedValue:point.z

withinRangeOffset:1];

view.transform = CGAffineTransformScale(

CGAffineTransformIdentity, z, z);

view.layer.zPosition = z;

}

- (CGFloat)coordinateForNormalizedValue:(CGFloat)normalizedValue

withinRangeOffset:(CGFloat)rangeOffset {

CGFloat half = rangeOffset / 2.f;

CGFloat coordinate = fabs(normalizedValue) * half;

if (normalizedValue > 0) {

coordinate += half;

} else {

coordinate = half - coordinate;

}

return coordinate;

}

Once the setItems method is called, we can generate a point for each view and layout that view by placing and scaling it according to the converted iOS coordinates. Now you may be able to create a view controller and instantiate the PFSphereView passing a bunch of UILabels to see how our 3D Tag Cloud looks like.

Unfortunately you can’t animate any kind of rotation yet, since we did not address it. And now is when the cool part comes into play.

We actually can’t use any 3D transformation available on the SDK since we don’t want to rotate our labels, but instead the whole sphere. How can we achieve this?

Well, to rotate our sphere all that we need to do is to rotate each point. Once a point is rotated, its coordinates changes on the cartesian plane and therefore each view will rotate around the desired axis in such a way that only its position and scale will change (not the actual rotation angle).

The achieved behavior is totally different from the result we would get by changing the anchorPoint to be the center of the sphere and use CATransform3DRotate, for example.

If you didn’t get exactly why, take a moment to draw some points on a 3D cartesian plane in some piece of paper. Imagine each point rotating around the center of the sphere so that we can actually see a sphere rotating. Then imagine every view – UILabel on our case – rotating around the very same point. Once you find out what is the difference, continue to read this post.

….

Time to rotate our sphere.

The basic idea behind transformations such as rotations on 3D space, is to think of our views as a bunch of points or vectors where we apply some math and project the results back into the 3 dimensional space. A very efficient way to do so, is to use a homogeneous coordinate representation that maps a point on a n-dimensional space into another on the (n+1)-dimensional space, so that we can represent any point or geometric transformations using only matrixes.

To apply a transformation to a point, we need to multiply two matrixes: the point and the transformation matrix.

Computer Graphics taught us that every transformation can be achieved by multiplying a matrix set. This knowledge allows not only allows us to apply the very basic form of rotation, but also to concatenate transformations in order to achieve a “real world” rotation. For example, if we want to rotate an object around its center we need to translate that object to the origin, rotate it and then translate it back to its original position.

And that is what we are going to do with each of our points.

If you don’t have a basic understanding of geometric transformations you should read this topic about rotation to get familiar with terms and matrixes used here.

Now that we know the sequence of step we need to take in order to achieve rotation around a given point on a given axis, we just need to get down into math. Let’s do that using code.

So, there are three kinds of primitive geometric transformations that can be combined to achieve any geometric transformation: rotation, translate and scaling.

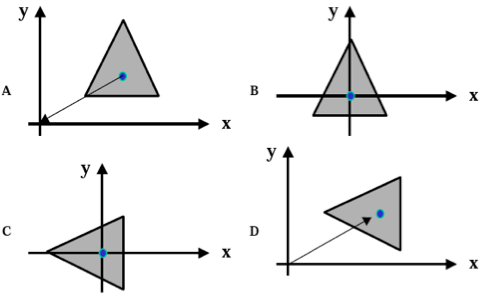

We saw that we can combine translations and rotations (and that order matters…just look at the figure and try achieve D not following the order A-B-C-D) into only one matrix and multiply our point by this matrix to retrieve a new point that is previous point rotated around an arbitrary point. But how do we define which axis we are rotation about or how do we tell what is a translation and what is a rotation?

Actually there is a set of primitive matrixes defined for each primitive geometric transformation. Bellow I provide useful matrixes for our goal: translation and rotation for each axis (x, y and z).

static PFMatrix PFMatrixTransform3DMakeTranslation(PFPoint point) {

CGFloat T[4][4] = {

{1, 0, 0, 0},

{0, 1, 0, 0},

{0, 0, 1, 0},

{point.x, point.y, point.z, 1}

};

PFMatrix matrix = PFMatrixMakeFromArray(4, 4, *T);

return matrix;

}

static PFMatrix PFMatrixTransform3DMakeXRotation(PFRadian angle) {

CGFloat c = cos(PFRadianMake(angle));

CGFloat s = sin(PFRadianMake(angle));

CGFloat T[4][4] = {

{1, 0, 0, 0},

{0, c, s, 0},

{0, -s, c, 0},

{0, 0, 0, 1}

};

PFMatrix matrix = PFMatrixMakeFromArray(4, 4, *T);

return matrix;

}

static PFMatrix PFMatrixTransform3DMakeYRotation(PFRadian angle) {

CGFloat c = cos(PFRadianMake(angle));

CGFloat s = sin(PFRadianMake(angle));

CGFloat T[4][4] = {

{c, 0, -s, 0},

{0, 1, 0, 0},

{s, 0, c, 0},

{0, 0, 0, 1}

};

PFMatrix matrix = PFMatrixMakeFromArray(4, 4, *T);

return matrix;

}

static PFMatrix PFMatrixTransform3DMakeZRotation(PFRadian angle) {

CGFloat c = cos(PFRadianMake(angle));

CGFloat s = sin(PFRadianMake(angle));

CGFloat T[4][4] = {

{c, s, 0, 0},

{-s, c, 0, 0},

{0, 0, 1, 0},

{0, 0, 0, 1}

};

PFMatrix matrix = PFMatrixMakeFromArray(4, 4, *T);

return matrix;

}

It would be nice of you to properly research around the math behind these matrixes (This post would be too long if I included a proper explanation about “where the hell does this matrix set came from??” and you probably would not read this post until the very end 😉 [BTW, I am surprised you got here])

As you may have noticed I created a bunch of representations and helpers to make the code really simple and straightforward. But I don’t think that you need me to provide that code (matrix representation for example), so I will keep speaking of what really matters. Anyways, you can always reach me via e-mail if you want some sort of code.

Ok….we already have a specific matrix to rotate a point around any axis and also one to translate it. How are we supposed to use these matrixes to rotate a point around another arbitrary point?

Since the “the best way to teach is by example”….

Let’s say that you want to rotate (1,1,1) around (0,1,1) by 45 degrees on the x axis. In this case the code looks like:

static PFMatrix PFMatrixTransform3DMakeXRotationOnPoint(PFPoint point,

PFRadian angle) {

PFMatrix T = PFMatrixTransform3DMakeTranslation(

PFPointMake(-point.x, -point.y, -point.z));

PFMatrix R = PFMatrixTransform3DMakeXRotation(angle);

PFMatrix T1 = PFMatrixTransform3DMakeTranslation(point);

return PFMatrixMultiply(PFMatrixMultiply(T, R), T1);

}

PFMatrix coordinate = PFMatrixMakeFromPFPoint(PFPointMake(1,1,1));

PFMatrix transform = PFMatrixTransform3DMakeXRotationOnPoint(

PFPointMake(0,1,1), 45);

PFMatrix transformedCoordinate = PFMatrixMultiply(coordinate,

transform);

PFPoint result = PFPointMakeFromMatrix(transformedCoordinate);

Ok….What the heck is going on!?

First of all we have to create a matrix from our point (UILabel.center) in order to be able to multiply the point by our geometric transformation. This transformation, in turn, consists of 3 primitive transformations.

The first one translates the point to the origin, and that is why we are building the Translate matrix using (-x,-y,-z). Then the second one builds a rotation of 45 degrees around the x axis.

As I explained before, these two transformations are concatenated through multiplication.

And since we want to rotate it around the “point” and not around “origin”, we translate it back to “point” by multiplying the resulting matrix by a second Translate matrix using (x, y, z).

That composite transformation is then multiplied by our point (UILabel.center) matrix representation. This gives us as a result a third matrix, from which we extract a point representation.

At this moment you should be thinking that “result” point will be our next center and scale representation for one of our labels. If you thought this you are right!

The basic idea now is to listen for gestures (using UIPanGestureRecognizer or UIRotationGestureRecognizer) to select which rotation matrix will be used and what angle you will pass to PFMatrixTransform3DMake(Axis)RotationOnPoint. Once you selected the matrix and found out the proper angle (based on the locationInView method from your gesture recognizer or even using a constant) you just need to iterate through every UILabel and call layoutView:onPoint: just like we did in the setItems method.

To don’t take from you all the fun, I will let you handle the gestures 😉

By the way, below is how your sphere should look like using this code (of course I added some code to actually handle gestures!).

Try to use [0.1, 1] instead of [0,1] for the z coordinate interval and play with view sizes to make it look how you would like to.

Hope you enjoy and share your thoughts!